Deepfake Images, Video & Voice Scams

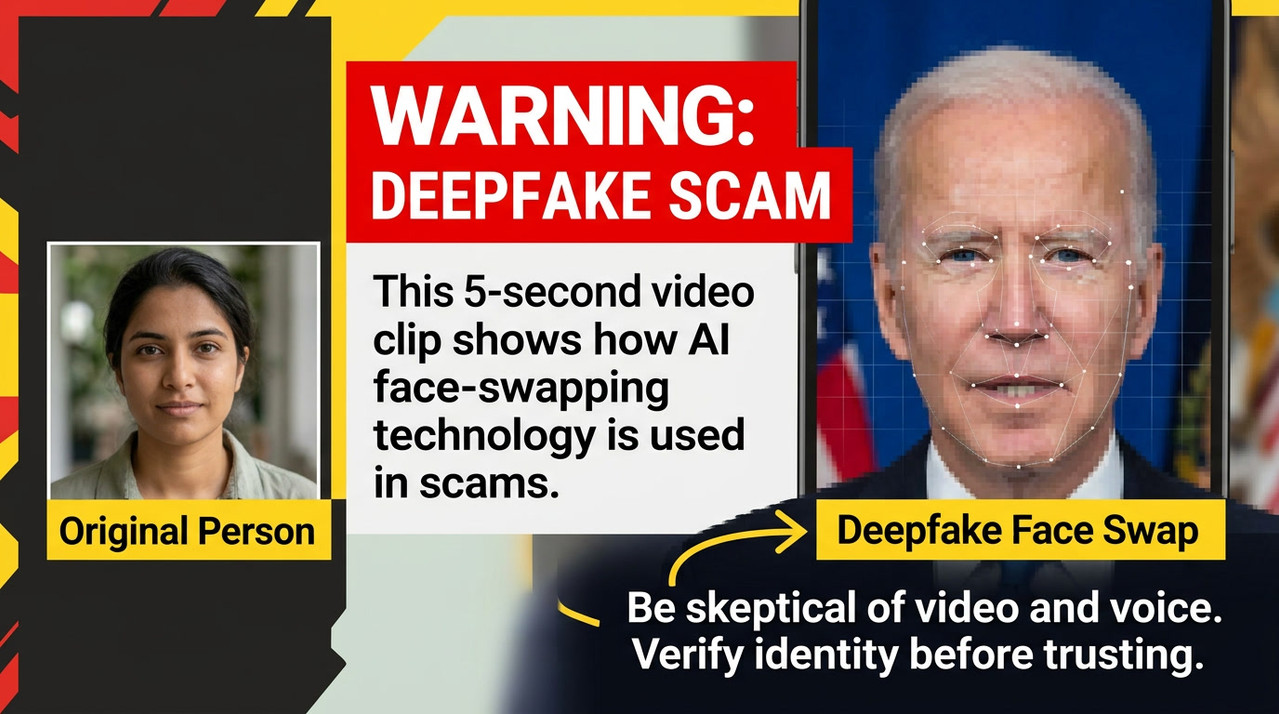

Deepfake scams use artificial intelligence to create fake images, videos, voices, identities and conversations that appear real enough to manipulate trust.

Key warning

Seeing or hearing someone is no longer enough proof that the message is real.

A cloned voice, fake video call, edited image or AI-generated profile can be used to pressure you into sending money, sharing information or trusting a false identity.

AI impersonation alert

“It sounded exactly like them.”

Deepfake scams can imitate a family member, celebrity, company leader, recruiter, investor, bank employee or public figure.

Common trick

“I’m in trouble. Please send money now and don’t tell anyone.”

Common fake proof

A realistic voice note, video call, photo, advert or social profile.

What is a deepfake scam?

A deepfake scam uses AI-generated or AI-manipulated media to make a person, message or identity appear genuine. This can include fake profile photos, altered videos, synthetic voices, faked video calls, fake adverts or realistic-looking screenshots.

The aim is usually to create trust quickly. The scammer may pretend to be someone you know, someone famous, a trusted professional, a senior manager, a romantic interest, a recruiter, a bank employee or a family member in distress.

ScamAdvisory rule

If the request is urgent, emotional or financial, verify outside the conversation.

Why deepfakes are dangerous

Deepfakes attack one of the strongest human trust signals: recognition. If someone looks, sounds or speaks like a person you trust, your guard may drop.

Scammers use this to make fake requests feel real. They may create panic, secrecy, urgency or authority so you act before checking.

Common targets

- Families receiving urgent calls from a cloned voice.

- Businesses receiving fake executive payment instructions.

- Investors seeing fake celebrity or expert endorsements.

- Dating app users speaking to AI-generated identities.

- Social media users trusting fake videos, images or adverts.

Images, video and voice can all be faked

The danger is not only the technology. The danger is the trust created by the fake content.

Deepfake images

AI-generated profile photos, fake identity documents, fake screenshots, fake lifestyle images or manipulated evidence.

Deepfake video

Fake videos showing celebrities, public figures, executives or trusted experts promoting scams or giving instructions.

Voice cloning

A cloned voice can imitate a loved one, boss, colleague, bank employee or official during an urgent phone call.

Family emergency scam

The caller sounds like a relative and claims they need money immediately for an accident, arrest, travel issue or crisis.

Business impersonation

A fake voice or video appears to come from a senior manager requesting payment, data, access or secrecy.

Fake investment endorsement

A deepfake advert uses a known person’s image or voice to promote a fake investment, crypto scheme or trading group.

Trust the request less than the face or voice

Deepfake scams are designed to make you focus on who the person appears to be, instead of what they are asking you to do.

Risk level

Critical

Urgency

You are pushed to act immediately, especially to send money, approve access or keep quiet.

Secrecy

They ask you not to tell family, colleagues, your bank or anyone who could verify the request.

Money request

The request ends in a payment: a transfer, gift card, crypto wallet, payment link or newly supplied bank details.

New contact route

The person suddenly contacts you from a new number, new account, new email or unfamiliar platform.

Small inconsistencies

The voice, lighting, lip movement, wording, timing, background or behaviour feels slightly wrong.

Sensitive information

They ask for passwords, codes, bank details, documents, private images or account access.

Verify the person through a separate trusted route.

End the call or pause the chat if money, secrecy, fear or urgency is involved.

Contact the person back using a phone number, email or method you already trust.

Use a family or workplace safe word for urgent money, travel, medical, access or payment requests.

For businesses, require two-person approval for payments, new payees, bank detail changes and urgent executive requests.

Do not rely on video, voice or screenshots alone. Verify the request, not just the appearance.

If you have already sent money or shared sensitive data, contact your bank, platform or organisation immediately.

ScamAdvisory

In the age of AI, verification matters more than recognition.

Deepfake images, video and voice can make fraud feel personal and believable. Slow down, use trusted contact routes, agree safe words, and never act on urgent requests without independent verification.